Black Man Falsely Arrested Based On AI Tech

NYPD Falsely Arrests Black Man Based On Faulty Facial Recognition Technology, Activist Groups Want Investigation

Once again, facial recognition technology is out here getting Black men arrested for crimes they didn’t commit, all because algorithms also apparently think all Black people look alike.

According to ABC 7, civil rights and privacy groups are demanding an investigation into the NYPD’s use of facial recognition technology after a Black man was allegedly arrested and jailed for two days based on a false identification.

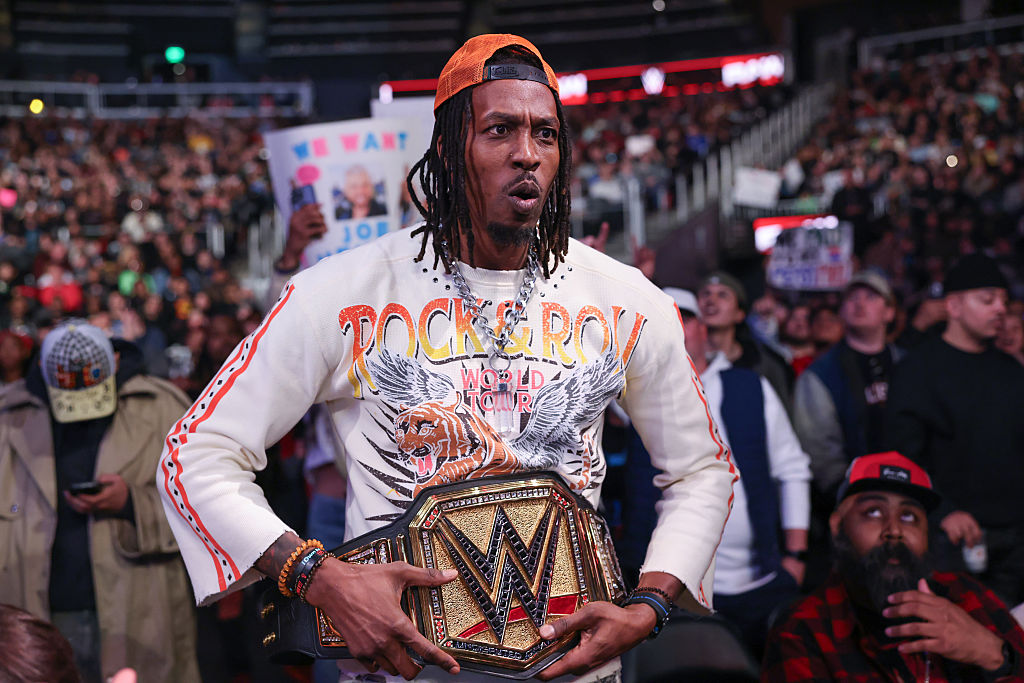

Trevis Williams told ABC that the actual criminal the NYPD was after looked nothing like him, aside from the fact that they are both Black men with similar hair.

“I was so angry … I was stressed out,” Williams said. “The man they were looking for — he was eight inches shorter than me and 70 pounds lighter.”

According to the New York Times, the arrest stemmed from a 911 call made in February by a woman who told the police that a deliveryman had exposed himself to her in a Manhattan building. She reportedly described the man as being 5 feet 6 inches, which makes it odd that officers arrested Williams about two months after the incident was reported, considering he stands at 6 feet 2 inches.

From the Times:

The man, Trevis Williams, was driving from Connecticut to Brooklyn on the day of the crime, and location data from his phone put him about 12 miles away at the time. But a facial recognition program plucked his image from an array of mug shots and the woman identified him as the flasher.

Like Mr. Williams, the culprit was Black, had a thick beard and mustache, and wore his hair in braids. Physically, the two men had little else in common. Mr. Williams was not only taller, he also weighed 230 pounds. The victim said the delivery man appeared to weigh about 160 pounds. But Mr. Williams still spent more than two days in jail in April.

“In the blink of an eye, your whole life could change,” Mr. Williams said.

The algorithms that run facial recognition technology can outstrip fallible human eyewitnesses, and law enforcement agencies say the results are not decisive on their own. But the case against Mr. Williams, which was dismissed in July, illustrates the perils of a powerful investigative tool that can lead detectives far astray.

“This could have very easily been solved by just really traditional police work,” said Diane Akerman, a staff attorney with the Digital Forensics Unit at the Legal Aid Society, one of the groups urging authorities, including Inspector General Jeanene Barrett, to launch an investigation into the police department’s use of facial recognition tech.

Of course, NYPD officials told ABC that the department’s use of this software is subject to legal regulations and that the technology’s successes far outnumber the times it has been wrong and led to false arrests. The department also noted that “even if there is a possible match, the NYPD cannot and will never make an arrest solely using facial recognition technology,” which raises the question: What else was Williams’ arrest based on?

More from the Times:

On April 21, the police caught Mr. Williams entering the subway through an exit gate in Brooklyn, and learned he was wanted for questioning in the Feb. 10 episode. They took him into custody.

When they interrogated him, Mr. Williams told the police that he had started making deliveries for Amazon on April 1. He explained he had worked in an Amazon warehouse during the Covid-19 pandemic and in Atlanta, but that at the time of the crime, he had been working his Connecticut job with autistic adults.

“That’s not me, man,” he said when the police showed him surveillance images of the flasher. “I swear to God, that’s not me.”

“Of course you’re going to say that wasn’t you,” the detective responded. He then asked what would happen if he pulled Mr. Williams’s employment records.

“Pull it,” Mr. Williams replied. “Please look it up.”

The police charged him the following day.

“The victim positively identified Mr. Williams,” said Brad Weekes, a spokesman for the Police Department. Mr. Weekes said the victim had told detectives she was “confident that was the same person, and only then was probable cause established to make an arrest.”

He said the victim’s photo identification, the questioning of Mr. Williams and his work history at Amazon were all part of the investigation. The police did not contact Amazon to find out the identity of the delivery man.

So, it appears the false identification and the fact that Williams worked for Amazon, which the police didn’t even bother to confirm with the company, were all that was needed for Williams to be falsely arrested and charged. Perhaps it’s true that the cops don’t make arrests “solely using facial recognition technology,” but it doesn’t appear that much else is required. In fact, Weekes claimed emphatically that it was “factually inaccurate” to say that the police had made false arrests based on the technology, even though that appears to be largely what happened to Williams.

“Everyone, including the NYPD, knows that facial recognition technology is unreliable,” Akerman said. “Yet the NYPD disregards even its own protocols, which are meant to protect New Yorkers from the very real risk of false arrest and imprisonment. It’s clear they cannot be trusted with this technology, and elected officials must act now to ban its use by law enforcement.”

Williams said the negative impact of his arrest didn’t end once the charges were dropped.

“I was in the process of becoming a correctional officer at Rikers Island,” he told ABC, adding that “they kind of froze the hiring process” after his arrest.

“I hope people don’t have to sit in jail or prison for things that they didn’t do,” Williams said.
